AI Systems Strategy

From Models to Agents: Why AI Infrastructure Is Becoming the Real Competitive Advantage

As AI systems evolve from simple model calls to autonomous agent workflows, the infrastructure required to run them efficiently is becoming the key differentiator.

Over the past few years, the AI industry has moved at an extraordinary pace. The first wave of generative AI was dominated by breakthroughs in model development—larger models, improved architectures, and better benchmark performance.

Today, however, two important shifts are beginning to reshape the industry:

AI systems are evolving from models to agents.

AI infrastructure is emerging as a key source of competitive advantage.

These two shifts are closely related. As AI applications become more autonomous and complex, the demands placed on the infrastructure running them increase dramatically. What once looked like a race to build better models is increasingly becoming a race to build better systems to operate them.

From Models to Agents

The early phase of generative AI largely focused on the model itself. Companies competed on model size, training data, architectural innovations, and benchmark performance. The assumption was straightforward: the organization with the most capable model would capture the greatest value.

Today the conversation is expanding beyond individual model calls. Increasingly, organizations are building AI agents that can plan tasks, interact with tools, retrieve knowledge, execute workflows, or collaborate with other agents.

In this new paradigm, AI systems move from stateless inference requests to stateful, multi-step workflows. A single user request might trigger a sequence of operations:

multiple model calls

tool executions

database queries

knowledge retrieval

external system interactions

As a result, the complexity of AI applications is increasing significantly.

Why Agents Increase Infrastructure Complexity

Agent-based systems introduce a new level of operational complexity compared to traditional model inference. Instead of a single request-response cycle, AI systems now involve orchestrated workflows that may run across multiple services and resources. For example, an AI agent responding to a request might:

Retrieve relevant knowledge from a vector database

Query multiple tools or APIs

Call several models for reasoning or summarization

Maintain state across multiple steps

Produce a final response

Even a seemingly simple request can trigger dozens of model invocations and tool interactions. This dramatically increases the demands on the underlying infrastructure.
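The steps above can be sketched as a small, stateful workflow. This is a minimal illustration rather than a real agent framework: `AgentState`, `retrieve`, `call_tool`, `call_model`, and `handle_request` are hypothetical names, and every external system (vector database, tools, model endpoint) is replaced by a stand-in that only records that a call happened.

```python
from dataclasses import dataclass, field


@dataclass
class AgentState:
    """State carried across the steps of one request (hypothetical structure)."""
    request: str
    context: list[str] = field(default_factory=list)
    model_calls: int = 0
    tool_calls: int = 0


def retrieve(state: AgentState) -> None:
    # Stand-in for a vector database lookup.
    state.context.append(f"doc about {state.request}")
    state.tool_calls += 1


def call_tool(state: AgentState, name: str) -> None:
    # Stand-in for an external tool or API call.
    state.context.append(f"{name} result")
    state.tool_calls += 1


def call_model(state: AgentState, purpose: str) -> str:
    # Stand-in for a model invocation; a real system would hit a serving endpoint.
    state.model_calls += 1
    return f"{purpose} over {len(state.context)} context items"


def handle_request(request: str) -> tuple[str, AgentState]:
    """Run one request through retrieval, tools, reasoning, and a final summary."""
    state = AgentState(request)
    retrieve(state)
    for tool in ("search", "calendar"):  # hypothetical tool names
        call_tool(state, tool)
    # The reasoning output is kept in state so the final call can use it.
    state.context.append(call_model(state, "reasoning"))
    answer = call_model(state, "summary")
    return answer, state


answer, state = handle_request("pricing")
print(answer, state.model_calls, state.tool_calls)
```

Even this toy version issues five backend calls for one user request, and each of them would need capacity, routing, and monitoring in a production deployment.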

The Infrastructure Challenge

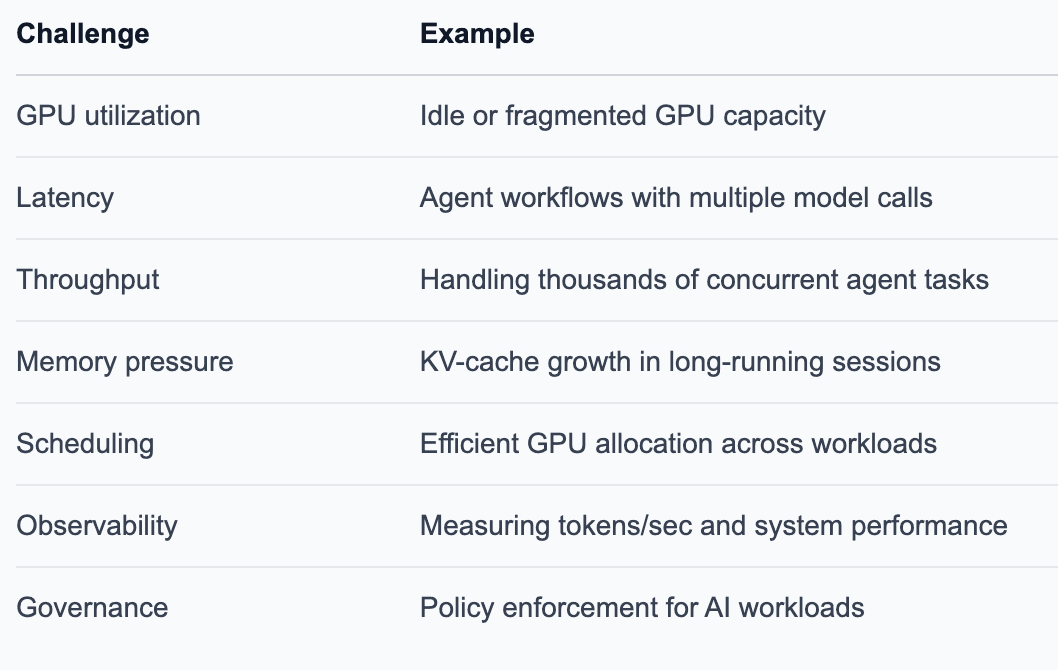

As AI systems scale in production environments, organizations encounter a new class of operational challenges.

These problems are fundamentally infrastructure problems rather than model problems. The more sophisticated the AI application becomes, the more critical the infrastructure layer becomes.

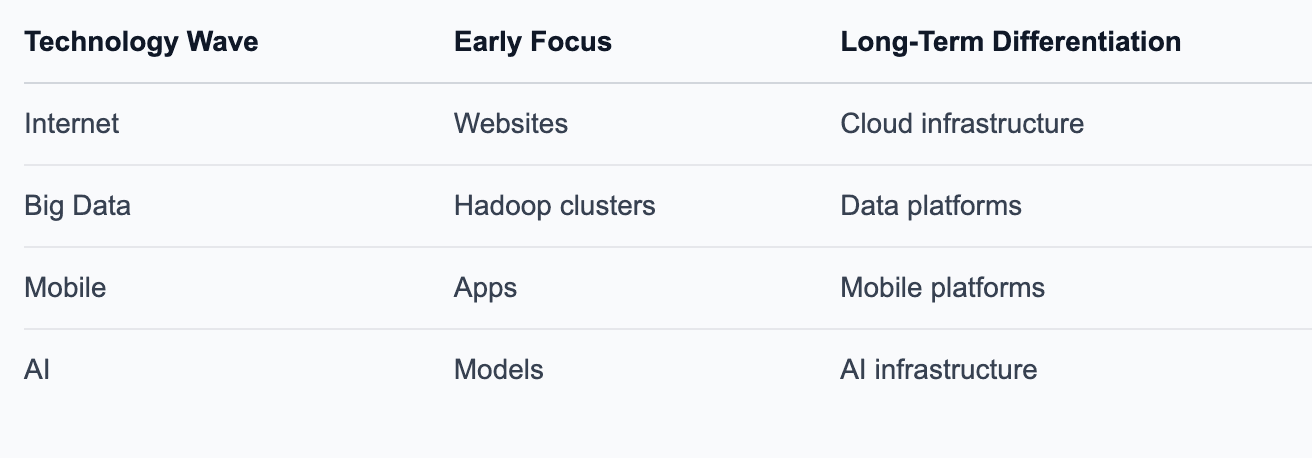

Lessons From Previous Technology Waves

History shows that many major technology waves follow a similar pattern. Early innovation tends to occur at the application or technology breakthrough layer, but long-term competitive advantage often shifts toward platform infrastructure. Examples include:

Infrastructure becomes strategic because it governs performance, economics, and scalability across entire ecosystems. Companies that control the infrastructure layer often shape how the entire ecosystem evolves.

Understanding Competitive Advantage Through the Resource-Based View

To better understand where durable competitive advantage comes from, strategy researchers often turn to the Resource-Based View (RBV) of the firm. RBV argues that long-term competitive advantage arises from resources and capabilities that a firm controls internally rather than simply from market positioning. Examples of such resources include:

proprietary technology

specialized infrastructure

operational expertise

engineering capabilities

organizational processes

The key question RBV asks is: Which capabilities allow a company to outperform competitors in a way that is difficult to replicate?

In the context of AI, the important question becomes: Which capabilities will create a durable advantage as the industry evolves from models to agent-based systems? To evaluate this, strategy research often uses the VRIO framework.

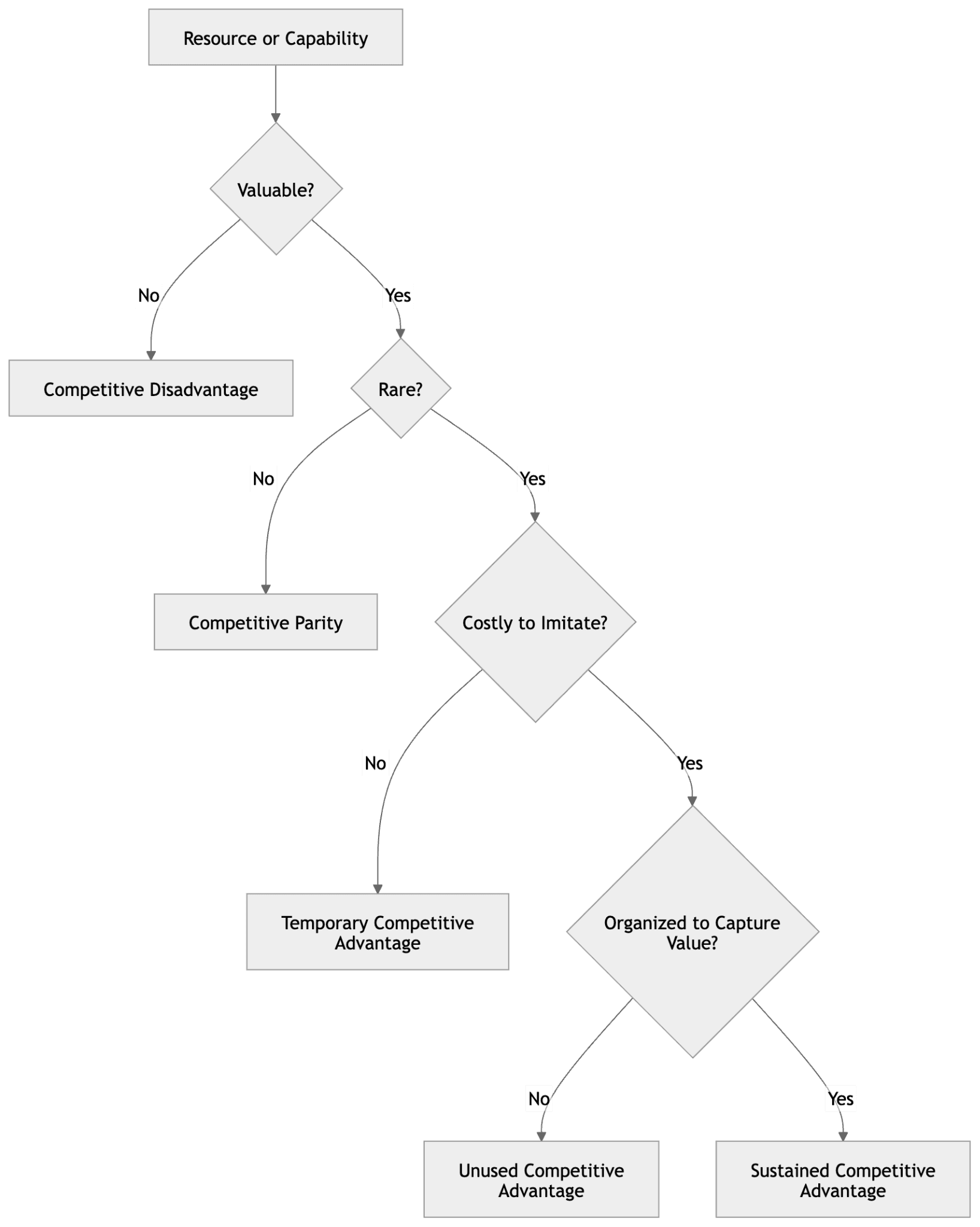

Evaluating AI Capabilities Through the VRIO Framework

The VRIO framework evaluates whether a resource can produce sustained competitive advantage. VRIO stands for:

Value — Does the capability create economic value?

Rarity — Is it scarce among competitors?

Imitability — Is it difficult to replicate?

Organization — Is the company structured to capture the value?

When all four conditions are satisfied, a capability can create sustained competitive advantage.
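One way to make the four questions concrete is to treat them as a sequential check. The sketch below follows the conventional textbook reading of VRIO, in which failing an earlier question caps the outcome; only the final case (all four satisfied) is stated in this article, and the intermediate labels are the commonly used ones, included here as an assumption. The `Capability` class and the example inputs are hypothetical and purely illustrative, not assessments of any real company.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Capability:
    name: str
    valuable: bool
    rare: bool
    hard_to_imitate: bool
    organized: bool


def vrio_outcome(c: Capability) -> str:
    """Walk the V, R, I, O questions in order and return the implied outcome."""
    if not c.valuable:
        return "competitive disadvantage"
    if not c.rare:
        return "competitive parity"
    if not c.hard_to_imitate:
        return "temporary advantage"
    if not c.organized:
        return "unexploited advantage"
    return "sustained advantage"


# Illustrative inputs only; the flags are assumptions, not measurements.
examples = [
    Capability("widely available capability", True, False, False, True),
    Capability("rare but copyable capability", True, True, False, True),
    Capability("embedded operational capability", True, True, True, True),
]
for c in examples:
    print(f"{c.name}: {vrio_outcome(c)}")
```

The ordering matters: a capability that is valuable but not rare yields parity no matter how well the organization is set up to exploit it.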

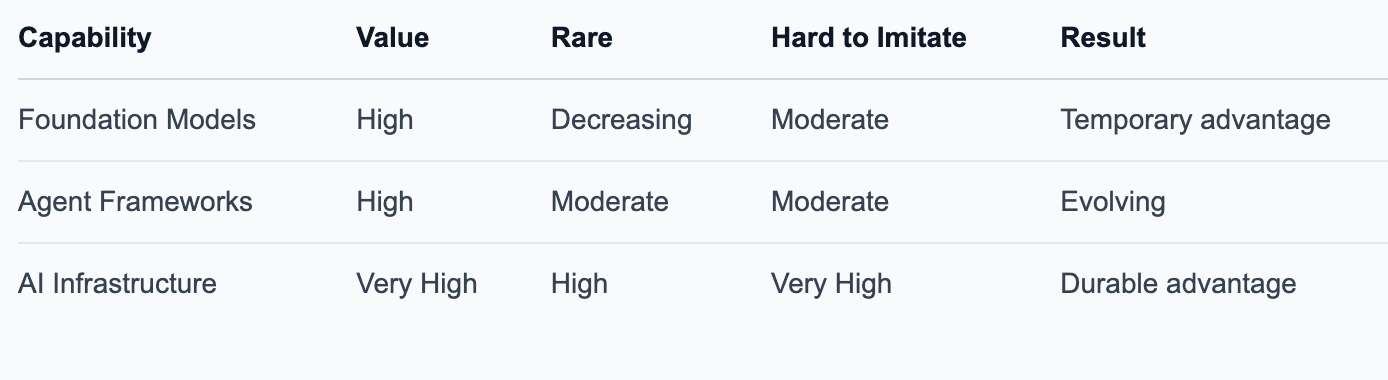

Applying VRIO to the AI Landscape

Applying the VRIO framework to AI capabilities reveals an interesting pattern.

Foundation models remain valuable, but they are becoming increasingly accessible through open models, hosted APIs, and fine-tuning platforms, which erodes their rarity. Infrastructure, however, has characteristics that make it a powerful source of competitive advantage:

it touches every workload

it controls operational cost

it improves with scale and operational data

it becomes deeply embedded in production systems

These properties make infrastructure significantly harder to replicate once an organization develops expertise and operational maturity.

The Emerging AI Infrastructure Stack

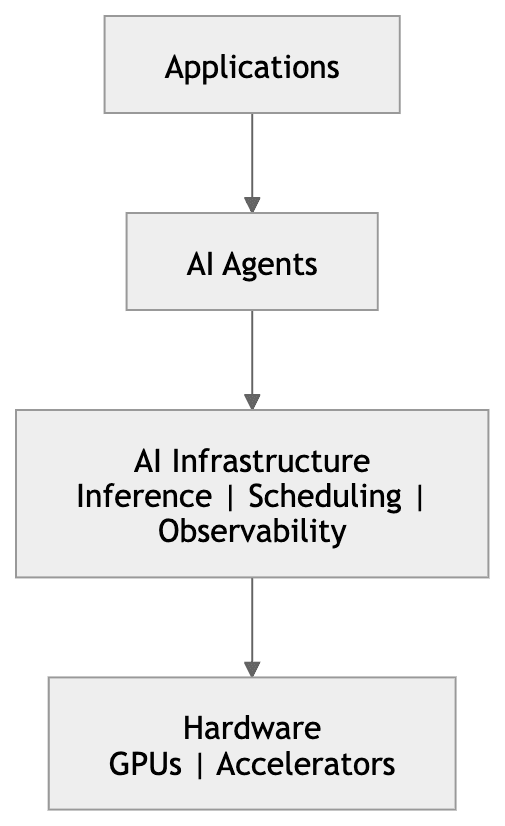

As AI systems evolve toward agent-based architectures, several new infrastructure layers are emerging.

Inference infrastructure: model serving, batching, memory optimization, runtime efficiency

GPU orchestration: multi-tenant GPU scheduling, predictive capacity scaling, resource fragmentation control

Agent infrastructure: tool execution environments, workflow orchestration, agent identity and permissions

AI observability: token usage tracking, GPU utilization metrics, latency and throughput monitoring

Together, these layers form the operational backbone of modern AI systems.
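As a small illustration of the observability layer, the sketch below records token usage and latency per workflow. It is a minimal in-process example using only the Python standard library; `UsageTracker`, its methods, and the workflow name are hypothetical, and a production system would more likely export these measurements to a dedicated metrics backend.

```python
import time
from collections import defaultdict
from contextlib import contextmanager


class UsageTracker:
    """Collects token counts and call latencies, keyed by workflow name."""

    def __init__(self) -> None:
        self.tokens = defaultdict(int)
        self.latencies = defaultdict(list)

    @contextmanager
    def track(self, workflow: str):
        # The caller fills in record["tokens"] once the model call returns.
        record = {"tokens": 0}
        start = time.perf_counter()
        try:
            yield record
        finally:
            # Recorded even if the call raises, so failures still show up.
            self.latencies[workflow].append(time.perf_counter() - start)
            self.tokens[workflow] += record["tokens"]

    def summary(self, workflow: str) -> dict:
        calls = self.latencies[workflow]
        mean = sum(calls) / len(calls) if calls else 0.0
        return {
            "calls": len(calls),
            "tokens": self.tokens[workflow],
            "mean_latency_s": mean,
        }


tracker = UsageTracker()
for used in (120, 80):  # hypothetical token counts from two model calls
    with tracker.track("support-agent") as record:
        record["tokens"] = used

print(tracker.summary("support-agent"))
```

Keying the metrics by workflow rather than by individual model call reflects the agent setting described earlier, where cost and latency accumulate across many calls behind a single user request.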

What This Means for the Next Phase of AI

The next phase of AI development may be shaped by two forces working together:

Agents will drive application innovation.

Infrastructure will determine operational success.

As AI systems become more complex and autonomous, the infrastructure supporting them will increasingly determine cost efficiency, system performance, scalability and reliability. In other words, the durable competitive moat may lie not just in what AI systems can do, but in how efficiently they can run.

Closing Thought

The AI revolution is often framed as a race to build better models. But the industry is beginning to recognize that models alone are not enough. As AI evolves from models to agents, the systems required to operate those agents at scale will become increasingly important.

The next frontier of AI may not simply be smarter models—but smarter infrastructure.